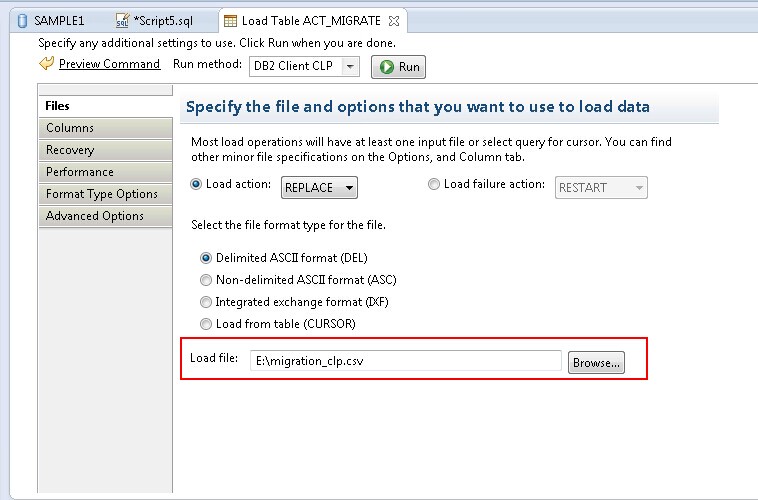

The first step before loading (or exporting) data is to get connected to a database. For more information on getting connected, see How to Get Connected.

You will need to know which file populates which table, regardless of the technique you use. If you need to import from a single IXF file, create a single IXF file from the source. If the source data is in another format, such as a 123 spreadsheet file, use an appropriate converter first so that the structure of the data matches the target table.

The simplest (and slowest) way is to use the Db2 LOAD utility to load the files in series. With multiple IXF files, loading them one at a time in a fixed order is the normal approach, and it is the safe choice when data from different sources cannot be loaded independently. By default, LOAD also picks its own CPU and disk parallelism, so Db2 already does some of this optimization for you.

More sophisticated ways include running the Db2 LOAD utility in parallel, depending on the number of cores you have available and the available I/O bandwidth. For example, if you have 32 cores, you might run 20 concurrent load jobs, each loading a separate table and each having its own connection to the database. There is no benefit to this approach unless your containers are on a SAN with plenty of I/O bandwidth.

Regardless of whether you are loading in series or in parallel, you should check each command's exit code, be able to restart from the point of failure, and verify that the number of rows loaded matches the number of rows in each IXF file (accounting for any rejected rows).

Db2 on Linux is very programmable with shell scripting (for example, with bash). You can also script this type of job in Perl, Python, or any other scripting language.
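The row-count check mentioned above can be automated by parsing the summary that LOAD prints at the end of each run. The sketch below assumes that summary has the usual `Number of rows read = ...` lines and that you already know how many rows each IXF file contains (for example, from the export step). The function names `parse_load_summary` and `verify_load` are hypothetical, not part of any Db2 API.

```python
import re
from typing import Dict, List

# Matches summary lines such as "Number of rows loaded       = 998".
_SUMMARY_LINE = re.compile(r"Number of rows (\w+)\s*=\s*(\d+)")


def parse_load_summary(output: str) -> Dict[str, int]:
    """Collect the 'Number of rows ...' counters from LOAD output."""
    return {name: int(value) for name, value in _SUMMARY_LINE.findall(output)}


def verify_load(output: str, expected_rows: int) -> List[str]:
    """Return a list of problems; an empty list means the counts reconcile.

    expected_rows is the number of rows in the IXF file, which must be
    obtained separately (an assumption of this sketch).
    """
    counts = parse_load_summary(output)
    needed = ("read", "skipped", "loaded", "rejected")
    missing = [name for name in needed if name not in counts]
    if missing:
        return ["summary line(s) not found: " + ", ".join(missing)]

    problems: List[str] = []
    if counts["read"] != expected_rows:
        problems.append(f"read {counts['read']} rows, expected {expected_rows}")
    # Every row read should end up skipped, loaded or rejected.
    accounted = counts["skipped"] + counts["loaded"] + counts["rejected"]
    if accounted != counts["read"]:
        problems.append(
            f"skipped+loaded+rejected = {accounted}, read = {counts['read']}"
        )
    # Rejected rows are not an arithmetic error, but they need a human look.
    if counts["rejected"]:
        problems.append(f"{counts['rejected']} row(s) rejected; see the MESSAGES file")
    return problems
```

Capture the output of each `db2 "LOAD ..."` call and pass it to `verify_load`; a non-empty result is a reason to stop before loading the next table.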
To export a schema, go to the Schema Extract tab and either create a schema extract file or open an existing one from the Schema Extract Name drop-down. After exporting, you can IMPORT the data into another application. Which solution is best depends on your scripting skills and how often you want to run the job.
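If you script the job in Python, the series/parallel choice, the exit-code checks and the restart-from-failure requirement described above can live in one small driver. This is a sketch under several assumptions: the `db2` CLP is on the PATH, paths contain no spaces, and the names `run_db2_job`, `Checkpoint` and `load_all` are hypothetical. Each job runs in its own `bash` process because the CLP associates its back-end process (and therefore its connection) with the parent shell.

```python
import json
import subprocess
import threading
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Callable, Dict, List, Tuple

# Db2 CLP return codes: 0 success, 1 no rows found, 2 warning,
# 4 DB2 or SQL error, 8 CLP system error. Codes >= 4 count as failures here;
# a LOAD that rejects rows typically returns a warning, so check row counts too.
FAILURE_THRESHOLD = 4

Runner = Callable[[str, str], int]  # (ixf_file, table) -> exit code


def run_db2_job(database: str, ixf_file: str, table: str) -> int:
    """Connect, run one LOAD, disconnect, and return the LOAD exit code."""
    script = (
        f"db2 connect to {database} && "
        f'db2 "LOAD FROM {ixf_file} OF IXF MESSAGES {ixf_file}.msg '
        f'INSERT INTO {table}"; '
        "rc=$?; db2 connect reset >/dev/null; exit $rc"
    )
    return subprocess.run(["bash", "-c", script]).returncode


class Checkpoint:
    """Records finished tables in a JSON file so that a rerun skips them."""

    def __init__(self, path: Path):
        self.path = path
        self.lock = threading.Lock()
        self.done = set(json.loads(path.read_text())) if path.exists() else set()

    def mark_done(self, table: str) -> None:
        with self.lock:
            self.done.add(table)
            self.path.write_text(json.dumps(sorted(self.done)))


def load_all(jobs: Dict[str, str], checkpoint: Checkpoint,
             runner: Runner, max_workers: int = 1) -> List[str]:
    """Load each table -> IXF file pair that the checkpoint has not recorded.

    max_workers=1 loads in series, in the order given, and stops at the first
    failure. A larger value runs that many LOADs at once. Returns the failed
    tables; rerunning with the same checkpoint resumes from the failure.
    """
    pending = [(t, f) for t, f in jobs.items() if t not in checkpoint.done]
    failed: List[str] = []
    failed_lock = threading.Lock()

    def one(job: Tuple[str, str]) -> bool:
        table, ixf_file = job
        if runner(ixf_file, table) >= FAILURE_THRESHOLD:
            with failed_lock:
                failed.append(table)
            return False
        checkpoint.mark_done(table)
        return True

    if max_workers == 1:
        for job in pending:
            if not one(job):
                break
    else:
        with ThreadPoolExecutor(max_workers=max_workers) as pool:
            list(pool.map(one, pending))
    return sorted(failed)
```

A possible invocation, with a hypothetical database `SAMPLE` and table name: `load_all({"HR.EMPLOYEE": "/data/employee.ixf"}, Checkpoint(Path("load_done.json")), functools.partial(run_db2_job, "SAMPLE"), max_workers=20)`. Passing the runner in as a parameter keeps the restart logic testable without a live database.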

Only use IMPORT if you already know that the files are tiny and there is sufficient transaction logging capacity, or if the tables exist but not the indexes and you want the import action to create the indexes as well.

For speed, you don't have to use IMPORT; you can use the Db2 LOAD utility instead.
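The IMPORT-versus-LOAD rule of thumb above can be encoded when a script generates its CLP commands. In this sketch the size cut-off `TINY_FILE_BYTES` and the function name `build_command` are hypothetical choices, not Db2 defaults; the `COMMITCOUNT` and `MESSAGES` clauses are standard options of the IMPORT and LOAD commands.

```python
# Hypothetical cut-off for "tiny" files; tune it to your logging capacity.
TINY_FILE_BYTES = 10 * 1024 * 1024


def build_command(ixf_file: str, table: str, size_bytes: int,
                  commit_count: int = 1000) -> str:
    """Return a CLP command string: IMPORT for tiny files, LOAD otherwise."""
    messages = f"{ixf_file}.msg"
    if size_bytes <= TINY_FILE_BYTES:
        # IMPORT runs SQL inserts and is fully logged; COMMITCOUNT commits
        # every n rows so that a single transaction does not fill the log.
        return (f"IMPORT FROM {ixf_file} OF IXF COMMITCOUNT {commit_count} "
                f"MESSAGES {messages} INSERT INTO {table}")
    # LOAD writes formatted pages directly and is much faster for big files.
    return f"LOAD FROM {ixf_file} OF IXF MESSAGES {messages} INSERT INTO {table}"
```

For a real file you would pass `os.path.getsize(ixf_file)` as `size_bytes` and hand the resulting string to `db2` after connecting.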
